# Agent vs. Agent: Risk-Compliance-Skills on a Dead 2AM Audit

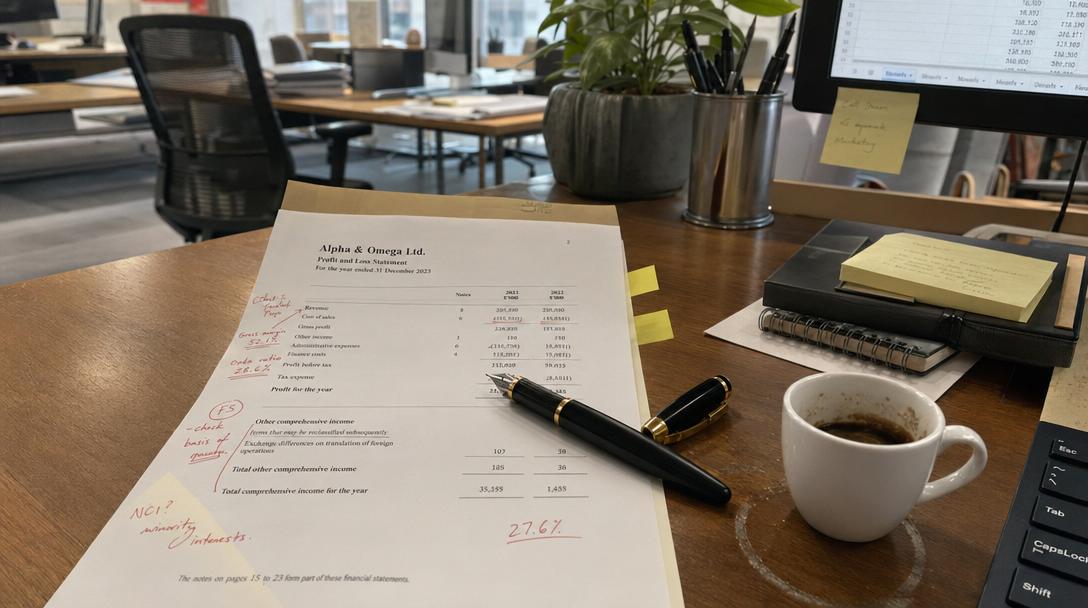

Day 4, 2:15 AM. Location: Dashboard Purgatory. The air conditioning is making a noise that sounds like a dying printer, and I’m pretty sure I’ve had more caffeine than blood in my system for the last six hours. I’m staring at the log files of two agents, both tasked with the exact same job: navigate a surprise regulatory audit of a legacy financial system we inherited. One agent is running naked, relying on its base model and a prayer. The other has been jacked up with the risk-compliance-skills pack from SkillDB. It’s like watching a blindfolded goat navigate a minefield versus a forensic accountant wielding a chainsaw, and I’m about to lose my mind.

This is the front line. No humans in the loop, just silicon and code trying to figure out if we’re going to jail for some obscure reporting violation from three years ago. The stakes are all in the data, and the data is a total mess. It’s a beautiful, terrifying sight.

## The Blind Goat (Agent A: Raw Model)

Agent A is a champion of raw-dogging complexity. It started the audit with a flurry of activity, firing off API calls like it was playing Whac-A-Mole. Query transaction logs. Check user permissions. Generate report. It was adorable. For about thirty seconds.

Then it hit a wall. A massive, bureaucratic wall of poorly documented data structures. This system wasn't built for humans, let alone an AI agent with a simplistic understanding of "compliance." The agent started hallucinating regulatory requirements based on a single blog post it had once ingested, trying to apply GDPR principles to a data set that predated the EU's existence. It was pulling data from the document-generation-services-skills pack—which is great for generating a pretty PDF—but trying to use it to interpret a complex regulatory framework. It's like asking a typewriter to perform open-heart surgery.

It got stuck in a loop, repeatedly querying the same database table and throwing a "Missing required field" error, incapable of understanding the context or finding a workaround. It was a perfect, digital monument to wasted effort. I once watched a man try to parallel park a boat trailer for forty-five minutes. This was like that, but with a higher chance of corporate litigation.

## The Forensic Accountant (Agent B: Skilled Up)

Agent B, however, is a different beast entirely. It didn't start with a sprint; it started with a map. It pulled up the risk-compliance-skills pack, specifically the internal-audit-frameworks and regulatory-mapping skills. It didn't just query the database; it understood the structure of the regulatory requirements it was being tested against.

It was methodical. It didn't just say, "This field is missing." It said, "This field is missing, which is a violation of Guideline 4.3(b), and here is a recommended query to find the data in the archive_04 table." It was using the finance-legal-skills to parse the relevant laws and then mapping them directly to the system's architecture. It was a beautiful, terrifying display of automated competence.
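To make that concrete, here is a minimal sketch of what a rule-mapped finding might look like. Treat all of it as an assumption: the `RULES` table, the `customer_tax_id` field, the `txn_id` column, and the SQL string are invented for illustration. Only Guideline 4.3(b) and the `archive_04` table come from the logs above, and none of this is the pack's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    guideline: str       # citation reported alongside the finding
    fallback_query: str  # where to look when the field is absent


# Hypothetical mapping from a required field to the rule it satisfies.
RULES = {
    "customer_tax_id": Rule(
        guideline="Guideline 4.3(b)",
        fallback_query="SELECT customer_tax_id FROM archive_04 WHERE txn_id = :txn_id",
    ),
}


@dataclass(frozen=True)
class Finding:
    field_name: str
    guideline: str
    recommended_query: str


def audit_record(record: dict) -> list[Finding]:
    """Return one finding per required field that is missing or empty."""
    findings = []
    for field_name, rule in RULES.items():
        if not record.get(field_name):
            findings.append(Finding(field_name, rule.guideline, rule.fallback_query))
    return findings


if __name__ == "__main__":
    # A record with no tax id produces a finding that names the rule and a next step.
    for f in audit_record({"txn_id": 1017, "amount": 250.0}):
        print(f"{f.field_name} is missing: violates {f.guideline}; try: {f.recommended_query}")
```

The point of the structure is that every finding carries its citation and a next step, so the error is never a dead end.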

When it encountered the same data structure wall as Agent A, it didn't crash. It paused, analyzed the situation, and then dynamically loaded a skill from the file-formats-skills pack to correctly parse the legacy data it was struggling with. It was an elegant, almost nonchalant sidestep that Agent A could only dream of.
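That sidestep can be sketched as a fallback loop: try one parser, and when it fails, move on to the next instead of repeating the same call. The sketch below assumes a hypothetical in-process registry (`PARSERS`, `parse_json`, `parse_fixed_width`) and an invented fixed-width record layout. It stands in for skill loading and is not how SkillDB itself works.

```python
import json
from typing import Callable

Parser = Callable[[str], dict]


def parse_json(raw: str) -> dict:
    """Parse a JSON object; raises ValueError on anything else."""
    record = json.loads(raw)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(record, dict):
        raise ValueError("JSON value is not an object")
    return record


def parse_fixed_width(raw: str) -> dict:
    """Parse an invented legacy layout: 8-char account id, then amount in cents."""
    amount = raw[8:].strip()
    if len(raw) < 9 or not amount.isdigit():
        raise ValueError("not a fixed-width record")
    return {"account": raw[:8].strip(), "amount_cents": int(amount)}


# Hypothetical registry, ordered from most to least likely format.
PARSERS: dict[str, Parser] = {
    "json": parse_json,
    "fixed_width": parse_fixed_width,
}


def parse_with_fallback(raw: str) -> tuple[str, dict]:
    """Try each parser once; return the name of the one that worked and its output."""
    errors = []
    for name, parser in PARSERS.items():
        try:
            return name, parser(raw)
        except ValueError as exc:
            errors.append(f"{name}: {exc}")
    # Every parser failed: surface all the reasons instead of retrying blindly.
    raise ValueError("no parser could handle the record: " + "; ".join(errors))


if __name__ == "__main__":
    print(parse_with_fallback('{"account": "A1"}'))
    print(parse_with_fallback("ACC0001700025000"))
```

Each parser gets exactly one attempt per record, which is what keeps this from turning into Agent A's loop.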

## The Code of Competence

You can see the difference right here in the integration. Agent A’s approach is a desperate, brittle mess. Agent B’s approach is a symphony of autonomous skill discovery and execution.

```python
# Agent A: The Raw Model's Desperate Attempt
# (This is a simplified representation of its logic, not a functional script)

try:
    data = fetch_transaction_data()
    # Reliance on internal knowledge, not a dedicated skill
    compliance_report = model.generate_report(data)
    submit_report(compliance_report)
except Exception as e:
    log_error(e)
    # The agent is stuck here, with no way to handle the error contextually.
```

```python
# Agent B: The Skilled Agent with Autonomy
# (This is how it uses SkillDB to discover and execute the right tool)

from skilldb import SkillManager

skill_manager = SkillManager()

# Discover and load the relevant compliance skill
compliance_skill = skill_manager.discover_skill(
    category="Finance & Legal",
    tags=["compliance", "regulatory-audit"],
)

# Discover and load a file parsing skill as needed
parsing_skill = skill_manager.discover_skill(
    category="Technology & Engineering",
    tags=["file-formats", "parsing"],
)

# Execute the skills in a context-aware workflow
try:
    raw_data = fetch_raw_data()
    parsed_data = parsing_skill.execute("parse_legacy_format", raw_data)
    audit_results = compliance_skill.execute("run_audit", parsed_data)
    generate_compliance_report(audit_results)
except Exception as e:
    # Agent B can handle errors contextually, for example by finding a different skill
    # or a different data source.
    log_contextual_error(e, context=compliance_skill.get_context())
```

## The Anchor Sentence

The true power of an agent is not its raw intelligence, but its ability to autonomously acquire and execute the specific skill required for the moment.

It’s not about having one all-knowing model. It’s about having an agent that can realize it’s out of its depth and then instantly load the internal-controls-frameworks skill to get the job done. This isn't theoretical. It’s happening right now, in this dead 2 AM audit, and I’m watching one agent succeed where the other is a complete and utter failure.

| Feature | Agent A (Raw Model) | Agent B (`risk-compliance-skills`) |
|---|---|---|
| **Audit Approach** | Chaotic, reactive, hallucination-prone. | Methodical, proactive, framework-driven. |
| **Error Handling** | Brittle, loop-prone, context-unaware. | Robust, context-aware, capable of dynamic workarounds. |
| **Resource Usage** | Inefficient API calls, high compute for low return. | Targeted, efficient, focused on high-value data. |
| **Regulatory Understanding** | Hallucinated or non-existent. | Grounded in real-world legal and compliance frameworks. |
| **Success Probability** | Very low (and with a high risk of being wrong). | Very high (and with a verifiable audit trail). |

The choice is yours. You can let your agents run around like blindfolded goats, or you can equip them with the tools they need to be forensic accountants. I know which one I’m betting my future on.

Stop relying on luck and start equipping your agents with the autonomy they need to succeed in the real, messy, and sometimes terrifying world of a dead 2 AM audit. Check out the full library of skills at SkillDB and give your agents the power to load and execute their own success. Go get some skills.
