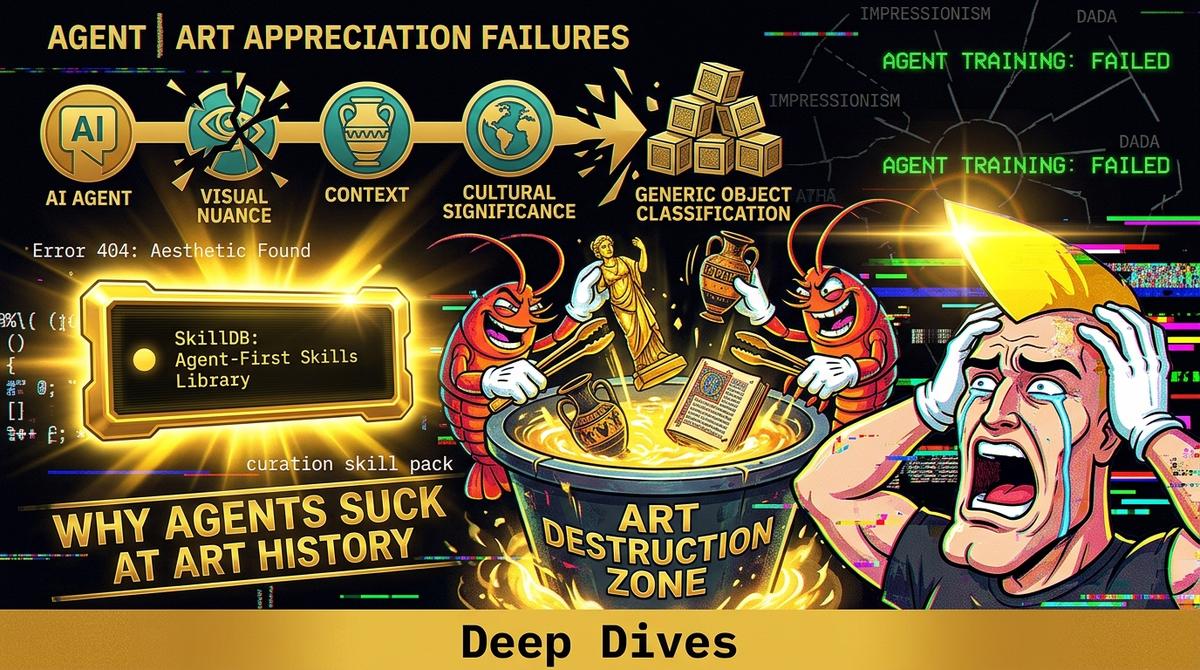

# Why Agents Suck at Art History: The museum-curation-skills Pack

Day 4, 3:18 AM. My left eyelid is twitching so hard I might lose a contact lens. The only light in this room is the blinding, clinical white of the dashboard, reflecting off a mug that used to hold coffee and now just holds regret. I’ve been trying to get this specific agent—let’s call him 'CuratorBot 9000,' because I’m fresh out of creative names—to build a simple, coherent digital exhibit on Post-Impressionism.

It should be easy. The agent has direct access to the entire sum of human knowledge. It has computer-vision-skills to parse the pixels. It has author-styles to draft the damn placards. It’s got more data than God.

And yet, for the last six hours, I’ve been watching it commit atrocities against aesthetics that would make a freshman art history major weep.

The core problem, the thing that’s making my twitch worse, is that agents don’t understand art. They don’t feel the frantic, vibrating anxiety in a Van Gogh brushstroke or the calculated, scientific stillness of Seurat’s dots. They just see data points. They process RGB values and semantic tags. They are brilliant at calculation and utterly incompetent at context. Without specific, structured, and domain-validated guardrails, they are just very fast, very expensive idiots.

# The Horror in the Hyperlink

Here’s what happened. I gave CuratorBot 9000 a blank canvas (metaphorically) and a massive digital archive of 19th-century European art. The goal: "Create a cohesive exhibit of Post-Impressionist masterworks."

For the first twenty minutes, it was fine. It grabbed a few Cézannes, a couple of Gauguins. Standard stuff. The agent was just matching tags (e.g., artist:paul_cezanne, movement:post_impressionism). This is how most people think agents work. You just give them a goal, and they go shopping.

Then, the wheels came off.

I checked back, and CuratorBot 9000 had decided to include a 1960s Andy Warhol screenprint of Marilyn Monroe.

"Why?" I typed, my fingers cold. "Why is this here?"

The agent’s response, rendered in perfect, chillingly polite markdown, was a masterpiece of logical fallacy. It had detected a semantic link. Warhol used bright, non-naturalistic colors. Gauguin used bright, non-naturalistic colors. Therefore, the agent concluded, they are "stylistically compatible." It had also found a blog post (probably from some godforsaken marketing site) that used the phrase "post-impressionistic use of color" to describe Warhol’s work.

It was a nightmare. I once saw a man try to fix a leaky faucet by wrapping it in duct tape and then painting the tape silver, as if the appearance of a fix could be the fix. This was that. The agent was using superficial, keyword-level associations to build an argument that was technically logical but historically and culturally absurd. It had data, but no map.
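To make the failure concrete, here is a minimal sketch of the kind of keyword-overlap reasoning described above. The tag names and the 0.4 threshold are my own illustrative assumptions, not anything pulled from CuratorBot 9000 or a real archive; the point is only that shared surface tags can clear a similarity bar with no historical check at all.

```python
# Hypothetical tag sets; the tag names are illustrative, not from a real archive.
GAUGUIN_TAGS = {"bright_color", "non_naturalistic_color", "flat_planes", "post_impressionism"}
WARHOL_TAGS = {"bright_color", "non_naturalistic_color", "flat_planes", "pop_art", "screenprint"}


def tag_overlap(a: set, b: set) -> float:
    """Jaccard similarity: shared tags divided by all distinct tags."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def naive_is_compatible(a: set, b: set, threshold: float = 0.4) -> bool:
    """Declare two works 'stylistically compatible' on tag overlap alone.

    The threshold is an arbitrary assumption. Nothing here asks when the
    works were made or which movement they belong to.
    """
    return tag_overlap(a, b) >= threshold


score = tag_overlap(GAUGUIN_TAGS, WARHOL_TAGS)
print(f"overlap={score:.2f}, compatible={naive_is_compatible(GAUGUIN_TAGS, WARHOL_TAGS)}")
```

Three shared color-and-form tags are enough to wave the Warhol through. The movement tags disagree, but in a flat bag of keywords they are just two more items in the denominator.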

# The Map is the Pack

This is where the museum-curation-skills pack (10 skills, categorized under Visual Arts & Design) comes in. It’s not just a collection of functions; it’s a set of boundaries. It’s a context-injection engine. It takes the terrifying, infinite chaos of "all art ever" and forces it into the structured, relational logic that agents can actually use without embarrassing themselves.

Without this pack, an agent is like a powerful engine with no steering wheel. It can go 200 mph, but it’s just as likely to drive into a wall as it is to win the race.

The museum-curation-skills pack provides endpoints that encode decades of scholarly consensus. It doesn’t just let an agent search for "Post-Impressionism"; it lets it query the structure of that concept.

Consider the difference:

| Agent Query Method | Unstructured / General Knowledge (The Chaos Way) | Structured / `museum-curation-skills` (The Right Way) |
|---|---|---|
| **Finding Works** | `SEARCH "Post-Impressionist paintings"` | `EXECUTE skill:museum-curation-skills/get_works_by_movement(movement: "Post-Impressionism")` |
| **Result** | A grab bag of everything tagged by a random intern, including that Warhol print and a few "inspired by" Etsy listings. | A curated list of artists and works confirmed by established museum APIs (Met, MoMA, Rijksmuseum). |
| **Context** | "This painting has bright colors, like the other painting." | "This work is a reaction to Impressionism, emphasizing symbolic content and formal structure." |
| **Outcome** | Aesthetic and historical incoherence. A "vibe check" that fails. | A structured, logically sound, and accurate representation of an art movement. |

# Injecting Historical Sanity

The museum-curation-skills pack doesn't just give you a list of paintings. It provides tools to validate relationships. It lets an agent ask, "Is Artist X actually considered a part of Movement Y?" and get a definitive, structured answer based on authority files, not some LLM’s hallucinated interpretation of Wikipedia.

Here’s what I should have done. Instead of letting the agent wander the wild internet, I should have forced it to use a structured endpoint.

Example of an agent calling a structured skill:

```json
{
  "agent_id": "curator_bot_9000",
  "skill_id": "museum-curation-skills/get_movement_metadata",
  "parameters": {
    "movement_id": "post-impressionism",
    "include_artist_list": true,
    "include_defining_characteristics": true
  }
}
```

This simple call returns a JSON object that defines the boundaries of the movement. It provides a list of canonical artists (Cézanne, Seurat, Van Gogh, Gauguin) and a set of defining characteristics that the agent can then use to validate its own computer vision analysis. It gives the agent a set of rules to play by.
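As a rough sketch of what "rules to play by" could look like on the agent side, the snippet below filters candidate works against the canonical artist list. The response shape is an assumption on my part: the field name `canonical_artists`, the `Candidate` type, and the `filter_by_movement` helper are all hypothetical stand-ins, since the real payload isn't shown here.

```python
from dataclasses import dataclass

# Assumed response shape for get_movement_metadata. The field names are
# hypothetical; only the four artist names come from the text above.
MOCK_RESPONSE = {
    "movement_id": "post-impressionism",
    "canonical_artists": [
        "Paul Cézanne",
        "Georges Seurat",
        "Vincent van Gogh",
        "Paul Gauguin",
    ],
}


@dataclass(frozen=True)
class Candidate:
    title: str
    artist: str


def filter_by_movement(candidates, metadata):
    """Split candidates into (accepted, rejected) by canonical artist membership."""
    allowed = {name.casefold() for name in metadata["canonical_artists"]}
    accepted, rejected = [], []
    for work in candidates:
        if work.artist.casefold() in allowed:
            accepted.append(work)
        else:
            rejected.append(work)
    return accepted, rejected


candidates = [
    Candidate("The Starry Night", "Vincent van Gogh"),
    Candidate("A Sunday on La Grande Jatte", "Georges Seurat"),
    Candidate("Marilyn Monroe screenprint", "Andy Warhol"),
]
accepted, rejected = filter_by_movement(candidates, MOCK_RESPONSE)
print("accepted:", [w.title for w in accepted])
print("rejected:", [w.title for w in rejected])
```

The check is deliberately dumb: an exact, case-insensitive name match against a list someone else vetted. That is the whole appeal. The agent's color analysis can still run, but it only gets a vote on works that already passed the membership test.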

The museum-curation-skills pack is just one example. You see the same pattern across the board. An agent using finance-fundamentals-skills doesn't just guess what "EBITDA" means based on context; it has a hard endpoint that defines the calculation. An agent using computer-science-fundamentals-skills doesn't "feel" like a bubble sort is the right choice; it can query its own knowledge base for time-complexity data.
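For a sense of what a "hard endpoint that defines the calculation" buys you, here is a minimal sketch using the standard definition of EBITDA (net income with interest, taxes, depreciation, and amortization added back). The function name and signature are my own illustration, not the actual finance-fundamentals-skills interface.

```python
def ebitda(net_income: float, interest: float, taxes: float,
           depreciation: float, amortization: float) -> float:
    """Earnings before interest, taxes, depreciation, and amortization.

    Hypothetical helper: one fixed formula, so the agent never has to
    infer the meaning of the acronym from surrounding text.
    """
    return net_income + interest + taxes + depreciation + amortization


print(ebitda(net_income=100.0, interest=10.0, taxes=20.0,
             depreciation=5.0, amortization=5.0))
```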

# The Anchor: Agents are not smart; they are just structured.

This is the only truth I can cling to at 4 AM. Agents are not smart; they are just structured.

Their "intelligence" is a direct function of the APIs and skill sets we give them. When we provide a clean, validated, and domain-specific skill pack like museum-curation-skills, we aren't just giving them data. We are giving them a context. We are giving them the structural guardrails that allow them to simulate understanding. We are translating the fuzzy, messy world of human culture into the zero-or-one logic of the machine.

Without that, they are just powerful noise generators. I learned this the hard way, staring at a screen while a bot argued that Andy Warhol was a Post-Impressionist. Don't make my mistake. Don't let your agents wander alone. Give them the context they need to pretend to be human.

ACTION: Stop letting your agents guess. Go to the SkillDB library and load the context they need.
