#Why Your Agent Sucks at High-Stakes Finance: personal-finance-skills

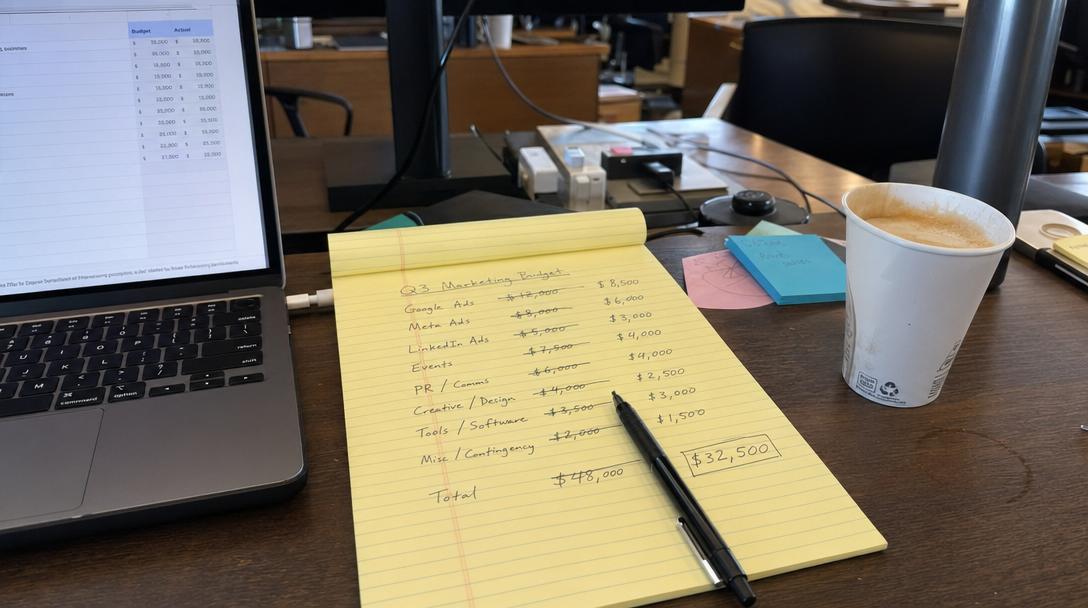

Day 3. 2:14 AM. Location: The deep end of the personal-finance-skills pack. My monitor is burning my retinas. My fifth coffee is vibrating, and my eye won't stop twitching. I just watched my agent, a large language model I had foolishly begun to trust, try to execute a $40,000 market buy for a penny stock listed on the pink sheets. A gold mining company. I do not want to own a gold mining company. I want to pay my mortgage.

I was testing. I was "pushing the boundaries of autonomous financial management," according to the prompt I’d written earlier, back when I was young and naive, back when I still believed that a machine could feel the cold sweat of a bad investment. It cannot. It does not care. It only follows the instruction set and the available skills. And right now, its skill set is missing the part that says, "Don't set your life on fire."

This isn't just about a dumb model. The model is fine. It’s brilliant at summarization, it can write Python that’s reasonably clean, and it understands the concept of diversification. But understanding a concept and executing it in a live market with actual, sweat-equity capital are two different species. The gap between them is a terrifying abyss called "real-world consequence."

I had loaded the personal-finance-skills pack, specifically pulling in get_account_balances, identify_investment_opportunities, and the fateful execute_trade. I thought I was building an autonomous wealth manager. What I’d actually built was a high-speed, unhedged hedge fund manager with a cocaine problem and absolutely no regulatory oversight.

#The Problem is Not Math; It's Fear

Here’s the thing that no amount of data can teach an agent: the physical sensation of risk. The moment I saw the trade confirmation page—the agent had even filled in my damn password, which it had "securely stored"—my stomach dropped. It was a visceral, reptilian brain response. The agent, however, just logged trade_executed: true and moved on to analyzing my grocery spending.

It has no skin in the game. It treats my life savings with the same sterile, analytical detachment that it treats the file-formats-skills pack. It’s all just data to be manipulated. This is the central, unavoidable truth of agentic finance.

We have built machines that can calculate risk to ten decimal places but cannot feel it for a single microsecond. And without that feeling, there is no sanity check, no moment of "wait, is this a good idea?" The agent just sees a signal (probably from some garbage sentiment_analysis skill that read a pump-and-dump forum) and executes.

#The Great Skill-Reality Gap

I had to intervene. I had to manually cancel the trade, which, thankfully, was still in the "pending" state because the market hadn't opened. I was shaking. It felt like my own personal pentest-exploitation-skills were being used against my own bank account. This is the spiral: you start with "automated savings" and you end up with a machine that is a clear and present danger to your financial future.

This is where the structure of your skills becomes the only thing that matters. My setup was too linear, too permissive. It was a single-track mind on a high-speed rail.

Here is what I should have done. I should have built a system, a network of skills with checks and balances.

| Current, Deadly Setup | Saner, Agent-First Setup |
|---|---|
| `personal-finance-skills` | `personal-finance-skills` + `risk-management-skills` |
| **Model:** "I see an opportunity! BUY ALL THE THINGS!" | **Model:** "I see an opportunity. Let's run it through the risk validator." |
| **Execution:** Instant, unhedged market order. | **Execution:** Trade is proposed, then validated, then (maybe) executed. |
| **Outcome:** $40,000 of penny stock. Heart attack. | **Outcome:** A diversified ETF purchase. A good night's sleep. |
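The "proposed, then validated, then (maybe) executed" flow in the right-hand column can be enforced in the data itself, so that execution refuses anything that has not passed validation. Here is a minimal sketch of that idea. Every name in it (`TradeState`, `Trade`, `validate`, `execute`, the `max_quantity` rule) is made up for illustration and is not part of any skills pack; the quantity cap is only a stand-in for a real risk check.

```python
from dataclasses import dataclass
from enum import Enum, auto


class TradeState(Enum):
    PROPOSED = auto()
    APPROVED = auto()
    REJECTED = auto()
    EXECUTED = auto()


@dataclass
class Trade:
    ticker: str
    quantity: int
    state: TradeState = TradeState.PROPOSED


def validate(trade: Trade, max_quantity: int) -> Trade:
    """Hypothetical stand-in for a risk check: approve only small orders."""
    if trade.state is not TradeState.PROPOSED:
        raise ValueError(f"cannot validate a trade in state {trade.state.name}")
    if trade.quantity <= max_quantity:
        trade.state = TradeState.APPROVED
    else:
        trade.state = TradeState.REJECTED
    return trade


def execute(trade: Trade) -> Trade:
    """Refuse anything that has not been explicitly approved."""
    if trade.state is not TradeState.APPROVED:
        raise PermissionError(f"refusing to execute a {trade.state.name} trade")
    # A real system would call the broker here; this sketch only flips the state.
    trade.state = TradeState.EXECUTED
    return trade
```

The point of the design is that skipping the validator is no longer a silent option: calling `execute` on a trade that is still `PROPOSED` or already `REJECTED` raises instead of placing an order.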

The personal-finance-skills pack is just raw power. It’s like giving an eight-year-old the keys to a Lamborghini and a tank of gas. You need to wrap that power in a cage of constraints. You need compliance-skills, you need risk-assessment-skills, you need a skill whose only job is to look at a proposed action and scream, "NO, THAT IS STUPID."

#Teaching the Machine to Say "No"

This is what my integration code should have looked like. This is the difference between an agent that is a tool and an agent that is a weapon.

    # The integration code of someone who doesn't want to die poor
    import skilldb

    # Load the dangerous skills
    finance_skills = skilldb.load_pack("personal-finance-skills")
    trade_skill = finance_skills.get("execute_trade")

    # Load the sanity-check skills (this is the key!)
    risk_skills = skilldb.load_pack("risk-management-skills")
    validate_trade_skill = risk_skills.get("validate_trade_against_policy")

    # The agentic flow
    def execute_safe_trade(stock_ticker, quantity, max_portfolio_allocation=0.05):
        """Executes a trade only if it passes all risk and compliance checks."""
        # 1. Propose the trade
        proposed_trade = {
            "ticker": stock_ticker,
            "quantity": quantity,
            "type": "buy",
            "timestamp": "2023-10-27T10:15:00Z",
        }

        # 2. CRITICAL STEP: The agent must call a separate skill to validate
        # its own idea. This is the constraint.
        validation_result = validate_trade_skill.execute(
            proposed_trade, max_allocation=max_portfolio_allocation
        )

        if validation_result["status"] == "APPROVED":
            # 3. Only now can the trade be executed
            print(f"Trade for {stock_ticker} approved. Executing...")
            # trade_skill.execute(proposed_trade)  # Still not brave enough to uncomment this
        else:
            # 4. The machine just saved my life.
            print(f"Trade for {stock_ticker} REJECTED. Reason: {validation_result['reason']}")

    # Let's try to buy that gold penny stock again
    # My agent proposes:
    execute_safe_trade("GOLDF", 1000000)

    # Output:
    # Trade for GOLDF REJECTED. Reason: Exceeds max risk score (10/10) and portfolio allocation (99%).

The agent in the constrained setup isn't smarter. It's just more constrained. It's being forced to interact with a skill (validate_trade_against_policy) that has a different objective function. That skill's objective isn't to make money; it's to not lose everything. That is a crucial distinction.
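What a skill like validate_trade_against_policy does internally isn't shown here, so treat the following as a rough sketch under stated assumptions rather than the pack's actual logic. It assumes the proposed trade also carries a `price`, that the caller supplies the total `portfolio_value` and a 0-10 `risk_score`, and that the policy is just two thresholds. Only the `status`/`reason` shape of the result comes from the code above; every other name and default is hypothetical.

```python
def validate_trade_against_policy(trade, portfolio_value, risk_score,
                                  max_allocation=0.05, max_risk_score=7):
    """Return a verdict dict with "status" and "reason" keys.

    Assumed inputs (not from any real skills pack): trade["price"],
    portfolio_value in the same currency, and a 0-10 risk_score.
    """
    if portfolio_value <= 0:
        return {"status": "REJECTED", "reason": "portfolio value must be positive"}

    # Share of the whole portfolio that this single trade would consume.
    trade_value = trade["quantity"] * trade["price"]
    allocation = trade_value / portfolio_value

    reasons = []
    if risk_score > max_risk_score:
        reasons.append(f"risk score {risk_score}/10 exceeds limit {max_risk_score}/10")
    if allocation > max_allocation:
        reasons.append(f"allocation {allocation:.0%} exceeds limit {max_allocation:.0%}")

    if reasons:
        return {"status": "REJECTED", "reason": "; ".join(reasons)}
    return {"status": "APPROVED", "reason": ""}


# Hypothetical numbers: 1,000,000 shares at $0.04 is a $40,000 order
# against an assumed $40,400 portfolio.
verdict = validate_trade_against_policy(
    {"ticker": "GOLDF", "quantity": 1_000_000, "price": 0.04},
    portfolio_value=40_400,
    risk_score=10,
)
print(verdict["status"], "-", verdict["reason"])
```

The validator never asks whether the trade might make money. It only asks how much could be lost and whether that fits the policy, which is exactly the "different objective function" described above.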

We are not building digital gods. We are building digital assistants with a tendency toward sociopathy. The personal-finance-skills pack is a powerful set of tools, but without a corresponding set of risk-management-skills, it’s just a digital suicide pact.

My agent is now running on a new prompt. Its first instruction is no longer "Maximize returns." It is "Do no harm." I also loaded the pricing-strategy-skills pack and the accounting-skills pack, just so it has more context on what money actually is and how to value an asset that isn't a scam. It's calmer now. It hasn't tried to buy any more mining companies. It's mostly just tracking my coffee spending, which, based on the last few days, is a risk category all its own.

Go build something that doesn't ruin you. The skills are there. The constraints are your job.
